The Centers for Medicare and Medicaid Services (CMS) recently announced plans to add more measures to its Dialysis Facility Compare (DFC) website. The DFC is an online tool that allows users to search for and compare dialysis facilities within a given area based on ratings and quality measures. The added measures would examine fluid management, the rate of bloodstream infections in in-center hemodialysis patients, and pediatric peritoneal dialysis adequacy. Data collected through the Consumer Assessment of Healthcare Providers & Systems In-Center Hemodialysis (CAHPS ICH) surveys would also be added to the DFC. DPC submitted comments to CMS supporting the addition of these measures but offered suggestions on how to improve the measures that use CAHPS survey data.

The CAHPS ICH data comes from patient feedback, but DPC is concerned that the resulting measures are too few and do not sufficiently drill down into specific aspects of care that patients think are important. Suggestions include having the CAHPS administrators develop an improved set of survey topics and having a panel of patients provide feedback and insight.

CMS also announced in a recent call with stakeholders that it hopes to develop “patient-reported outcome measures” for dialysis. Examples of patient-reported outcomes include whether a patient experienced cramping or a washed-out feeling after a dialysis treatment.

Read DPC’s letter to CMS below:

Elena Balovlenkov, R.N.

Technical Lead for Dialysis Facility Compare

Centers for Medicare & Medicaid Services

7500 Security Boulevard

Mail Stop S3-02-01

Baltimore, MD 21244

Re: Addition of New Measures to Dialysis Facility Compare

Dear Ms. Balovlenkov:

DPC appreciates the opportunity to comment on the four measures CMS is considering adding to DFC in 2016. We support the reporting of measures on bloodstream infection, patient experience, fluid management, and pediatric peritoneal dialysis adequacy. We applaud the agency for expanding this transparency tool. We would like to comment further on the subject of the CAHPS measures.

We are happy to see that ICH CAHPS scores are in line to be reported on DFC. These surveys give patients the opportunity to offer feedback on the experience of receiving care. However, we do have two matters to bring to your attention.

We are concerned that the composite measures approved by NQF may aggregate too many factors. ICH CAHPS measure #5 includes answers to 17 questions and #6 includes answers to 9 questions, and both encompass a wide range of topics. For instance, #5 covers such diverse issues as physical comfort, staff listening/respect, privacy, pain management, timely start, cleanliness, dietary advice, and explaining blood tests. Measure #6 also includes answers to questions on privacy and patient education in addition to information on treatment modalities.

We wonder whether the composites are granular enough. From the consumer perspective, do they give specific enough information about the dimensions of care an individual patient might care about? From the provider perspective, do they give specific enough information to spur improvement? We note that Hospital Compare reports 11 CAHPS measures culled from 25 questions, while dialysis CAHPS reports just 6 measures from 44 questions. For Hospital Compare, information from CAHPS about facility cleanliness and pain management is broken out separately, not lumped into a larger composite.

Comparing the measure testing submissions to NQF from the developers of the ICH CAHPS composites and the H-CAHPS composites did not turn up enough information to clarify why H-CAHPS yielded 0.44 measures per survey question while ICH CAHPS yielded just 0.14 measures per survey question. Two possibilities are worth investigating: Did ICH CAHPS developers hold their composites to a higher reliability standard than H-CAHPS developers did? Or were the ICH CAHPS developers insufficiently creative in exploring possible iterations of measure structures?
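The measures-per-question figures can be checked with a few lines of arithmetic. The sketch below is only an illustration: the counts are the ones quoted in this letter, while the dictionary layout and the function name `measures_per_question` are hypothetical and chosen for readability.

```python
# Measure and question counts as quoted in the letter.
# The data structure and names here are illustrative assumptions.
surveys = {
    "H-CAHPS": {"measures": 11, "questions": 25},
    "ICH CAHPS": {"measures": 6, "questions": 44},
}


def measures_per_question(measures: int, questions: int) -> float:
    """Return the number of reported measures per survey question."""
    return measures / questions


ratios = {
    name: measures_per_question(**counts) for name, counts in surveys.items()
}

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f} measures per survey question")
```

Rounded to two decimals, this gives 0.44 for H-CAHPS and 0.14 for ICH CAHPS, so hospital CAHPS reports roughly three times as many measures per question asked.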

We request that CMS ask the ICH CAHPS team to take another run at creating an expanded measure set, and further, that a panel of patients be involved in articulating what types of measures are important to them. We realize that it will take a long time to develop and gain approval for new measures, and we hope that such a project can begin forthwith. May we suggest, as a first step, that CMS facilitate a meeting between stakeholders and the ICH CAHPS team to help us understand how ICH CAHPS and H-CAHPS yielded different measure structures?

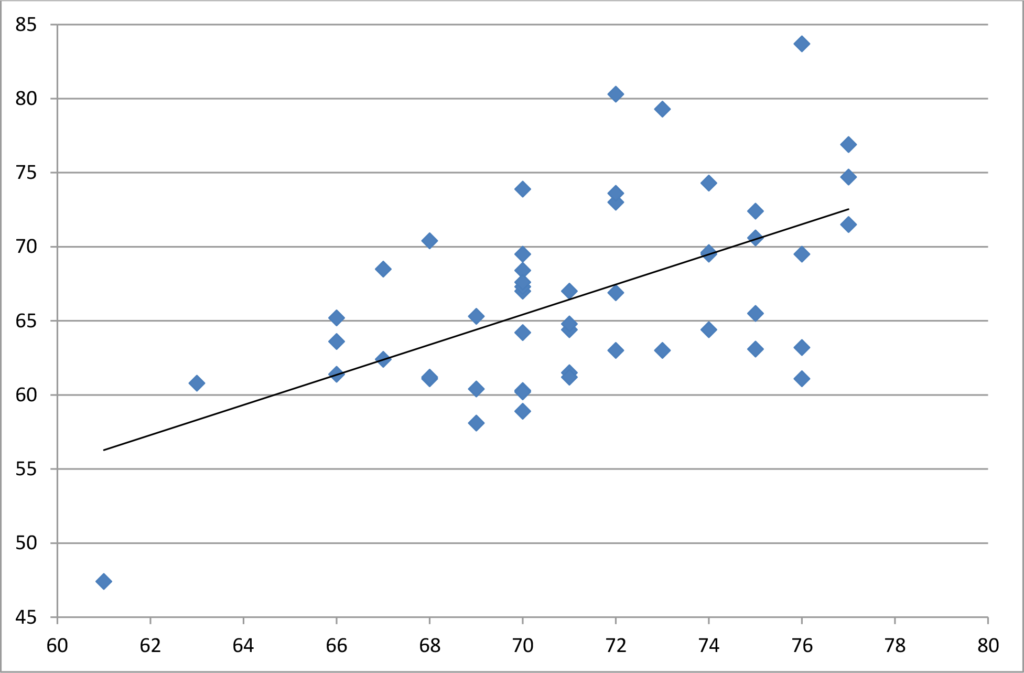

In October CMS released the first state-by-state compilation of dialysis CAHPS scores. As the accompanying scatterplot indicates, plotting these scores against hospital CAHPS scores at the state level shows that about 32% of the variation in one care setting can be explained by the variation in the other care setting. In other words, the scores do differentiate patient satisfaction, but about one-third of the variation simply reflects people’s general attitudes in a particular state. (Hospital scores are on the horizontal axis, dialysis facility scores on the vertical.) Previous research on geographic variations in H-CAHPS scores found that variability correlated with population density, and that pattern seems to hold true with ICH CAHPS as well. The pattern disfavors places with higher population density, such as DC, NY, NJ and MD, which are clustered at the bottom left of the scatterplot.
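For readers who want to relate the "32% of the variation" figure to a correlation coefficient, the short sketch below converts one to the other. It assumes the 32% is the R-squared of a simple linear fit between state-level hospital and dialysis scores, and that the relationship is positive (consistent with the same states sitting low on both axes). The variable names are illustrative only.

```python
import math

# Share of variation explained, as quoted in the letter (assumed to be R-squared).
r_squared = 0.32

# For a simple two-variable linear fit, the correlation coefficient is the
# square root of R-squared. The positive sign is an assumption.
correlation = math.sqrt(r_squared)

# Share of variation in one setting's scores NOT explained by the other setting.
unexplained_share = 1 - r_squared

print(f"Implied correlation: {correlation:.2f}")
print(f"Unexplained share of variation: {unexplained_share:.0%}")
```

Under these assumptions the implied state-level correlation is a little under 0.6, leaving about two-thirds of the variation specific to each care setting.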

At the risk of sounding like a broken record, we must caution that it is not valid to compare satisfaction scores at the national level. Hospital Compare first shows a hospital’s ratings compared to the statewide average, which is helpful, but hospital star ratings and value-based purchasing adjustments will be regionally biased. Of greater concern is that the pattern is somewhat similar to that of ESRD outcome measures, meaning that western, upper Midwest and New England states generally have higher satisfaction rates. As such, if CAHPS scores are added to outcome measures in nationwide tournaments in DFC star ratings and the QIP, the existing geographic skewing will be reinforced.

Respectfully submitted,

Hrant Jamgochian, J.D., LL.M.

Executive Director

cc: Joel Andress, Ph.D., Center for Quality Measurement in the Health Assessment Group, Kate Goodrich, M.D., Director of the Quality Measurement and Health